Unpacking the Environmental Effects of AI

As the world looks to artificial intelligence (AI) to solve many of its challenges, the growing environmental and societal effects of AI have become associated concerns. While AI encompasses a wide range of technologies, generative AI (GenAI), which generates text, images, or other content, comes with environmental challenges substantially greater than those of traditional AI systems.

This article focuses on the environmental effects of GenAI in particular, looking at every stage, from the energy used in its development and operation, to the consumption of water and mined materials, to the disposal of old equipment.

The Material Footprint of AI

Like all digital technologies, AI depends on computational power, with GenAI requiring substantially more resources than conventional technologies. Training complex AI models requires vast amounts of resources and specialized hardware as they process massive data sets and perform billions of calculations to make decisions or predictions. These calculations often need thousands of computer processors working in tandem, which requires energy, water for cooling, and production materials.

The energy intensity comes from a combination of the power required for training, energy cost per use, and the accelerating rate of adoption. With more AI applications emerging, the demand for more and faster models is increasing, meaning that the environmental effects are growing as well. Companies are also competing with each other to develop larger and more accurate models to attract consumers. Most AI companies do not disclose or report on the environmental impacts of their systems.

These effects are significant. While GenAI amplifies their magnitude considerably, the underlying issues of resource extraction, energy consumption, and electronic waste reflect broader patterns for digital infrastructure. This suggests that meaningful mitigation will require both AI-specific measures and economy-wide changes in resource extraction, power generation, and recycling.

1. Operational Demands: Energy and Water

While most people use computers daily, not everyone is familiar with the hardware inside, such as a Central Processing Unit (CPU) and a Graphics Processing Unit (GPU), or how much energy they consume.

The CPU is the processor that handles everyday tasks like checking email, browsing the internet, editing documents, and running standard software. It's found in most home and office computers, and typically uses about 1.5 to 2 kilowatt-hours (kWh) of electricity per month. That's roughly equivalent to leaving a small LED lightbulb on continuously for a day, or charging a smartphone daily for seven months.

kWh per month = (Watts × Hours used per day × Days per month) ÷ 1000

GPUs, originally built for gaming and video editing, are now vital for AI. Unlike CPUs, GPUs can process thousands of tasks at once, making them ideal for training large AI models. A single high-end GPU running intense video game graphics for just four hours a day can consume around 69 kWh monthly, which can be at least sixteen times the energy of a CPU.
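As a rough illustration of the formula above, the following Python sketch applies it to the GPU figure. The function names are hypothetical, and the 30-day month is an assumption; the article does not state the GPU's wattage, so the value here is back-calculated from the 69 kWh figure rather than taken from a specification.

```python
def monthly_kwh(watts: float, hours_per_day: float, days_per_month: int = 30) -> float:
    """Monthly energy use: watts x hours per day x days per month / 1000."""
    return watts * hours_per_day * days_per_month / 1000


def implied_watts(kwh_per_month: float, hours_per_day: float, days_per_month: int = 30) -> float:
    """Invert the formula to find the power draw implied by a monthly total."""
    return kwh_per_month * 1000 / (hours_per_day * days_per_month)


# Power draw implied by 69 kWh per month at four hours a day (assumes a 30-day month).
gpu_watts = implied_watts(69, 4)
print(f"Implied GPU draw: {gpu_watts:.0f} W")
print(f"Check: {monthly_kwh(gpu_watts, 4):.0f} kWh per month")

# Ratio of the GPU figure to the CPU range of 1.5 to 2 kWh per month.
print(f"GPU vs CPU: {69 / 2:.1f}x to {69 / 1.5:.1f}x")
```

Under these assumptions the implied draw is 575 W, and the ratio to the CPU range is well above the "at least sixteen times" stated in the text.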

Training large AI models, such as OpenAI's GPT-4 or Google's AlphaGo, can require thousands to tens of thousands of GPUs running continuously for several weeks. The electricity used would be enough to power hundreds of Canadian homes for a year. After training, thousands of GPUs remain active to serve millions of users. Training accounts for about one-third of an AI model's environmental footprint, while this operational phase accounts for about two-thirds.

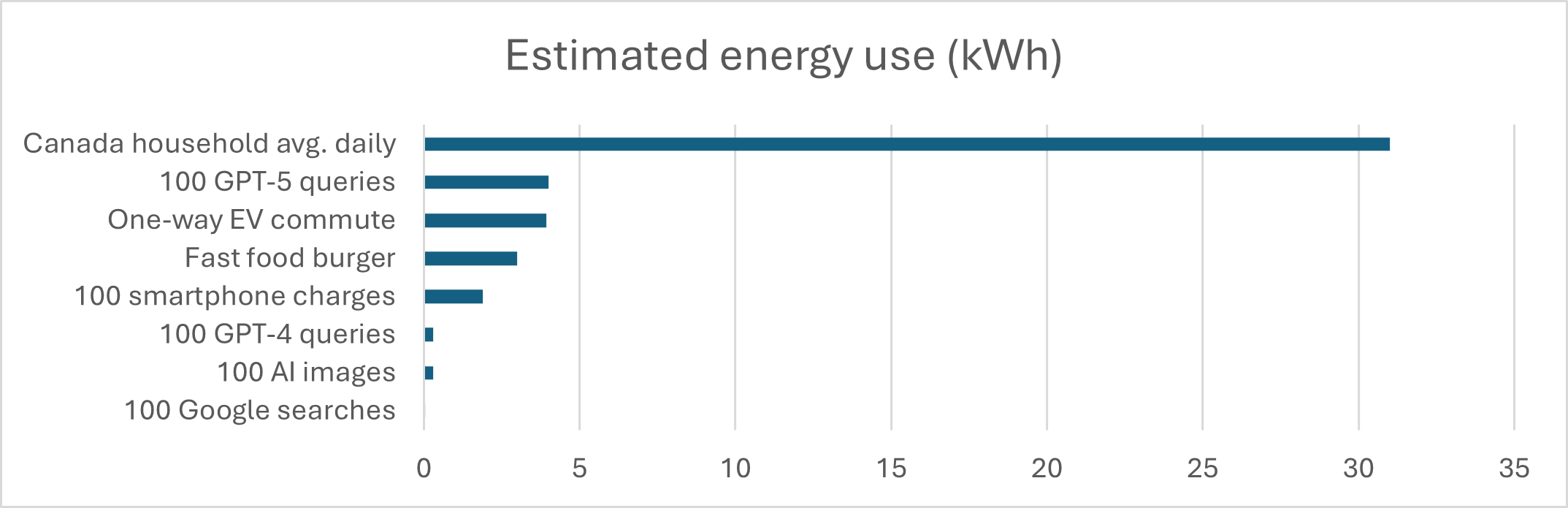

Most AI systems' operations take place in large data centres, which are facilities that store, process, and transmit data, making them a crucial part of the IT infrastructure of large firms. Data centres are also necessary for social media platforms, e-commerce activities, streaming services, and internet search engines. According to Google, a single search query uses about 0.0003 kWh of electricity. With 3.5 billion daily searches, that totals about 1.05 million kWh per day. In contrast, a single query to an AI like ChatGPT (GPT-4) can use roughly 10 times the electricity of a simple Google search, or about 0.0034 kWh. If large language models (LLMs), which generate text based on user queries, replace traditional search engines, power consumption may increase. The energy consumption of LLMs may also increase several times over as newer, more powerful models are developed. Some estimates suggest that GPT-5, which was launched in August 2025, consumes over ten times as much energy per query as GPT-4, around 0.04 kWh.
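The arithmetic behind these per-query figures can be sketched in a few lines of Python. The constant names are illustrative, and the final calculation is a hypothetical upper bound (every daily search replaced by a GPT-4 query), not a forecast from the article.

```python
# Per-query estimates quoted in the text (kWh).
GOOGLE_SEARCH_KWH = 0.0003
GPT4_QUERY_KWH = 0.0034
GPT5_QUERY_KWH = 0.04
DAILY_SEARCHES = 3.5e9

# Total electricity for one day of Google searches.
daily_search_kwh = GOOGLE_SEARCH_KWH * DAILY_SEARCHES

# How many times more electricity each step up uses per query.
gpt4_vs_search = GPT4_QUERY_KWH / GOOGLE_SEARCH_KWH
gpt5_vs_gpt4 = GPT5_QUERY_KWH / GPT4_QUERY_KWH

# Hypothetical scenario: every daily search becomes a GPT-4 query.
daily_llm_kwh = GPT4_QUERY_KWH * DAILY_SEARCHES

print(f"Daily searches: {daily_search_kwh / 1e6:.2f} million kWh")
print(f"GPT-4 vs search: {gpt4_vs_search:.1f}x")
print(f"GPT-5 vs GPT-4: {gpt5_vs_gpt4:.1f}x")
print(f"If all searches were GPT-4 queries: {daily_llm_kwh / 1e6:.1f} million kWh per day")
```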

Image generation also consumes a significant amount of energy. Transparent reporting is similarly lacking for this AI application, but estimates place it at around 0.0029 kWh per image.

For comparison, the average Canadian household uses about 11 135 kWh of electricity each year (about 31 kWh a day). Other everyday activities offer further points of comparison, each resting on some assumptions: a one-way commute in an electric vehicle, assuming an average of 9 kilometres driven per day, uses around 3.92 kWh; purchasing a cheeseburger from a fast food chain uses at least 3 to 5 kWh when production, packaging, refrigeration, and distribution are included; and charging a smartphone consumes about 0.019 kWh.

| Activity | Estimated energy use (kWh) |
| --- | --- |
| Canadian household, average daily use | 31 |
| 100 GPT-5 queries | 4 |
| One-way EV commute | 3.92 |
| Fast food burger | 3 |
| 100 smartphone charges | 1.9 |
| 100 GPT-4 queries | 0.3 |
| 100 AI images | 0.29 |
| 100 Google searches | 0.03 |
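The per-100 figures in the comparison above can be reproduced from the per-unit estimates quoted earlier. The sketch below is illustrative only; the names are hypothetical. The GPT-4 entry comes out at 0.34 kWh from the 0.0034 kWh per-query estimate, so the 0.3 kWh in the comparison appears to be a rounded value.

```python
HOUSEHOLD_KWH_PER_YEAR = 11_135
household_daily_kwh = HOUSEHOLD_KWH_PER_YEAR / 365  # about 31 kWh a day

# Per-unit estimates quoted in the text (kWh).
per_unit_kwh = {
    "GPT-5 query": 0.04,
    "smartphone charge": 0.019,
    "GPT-4 query": 0.0034,
    "AI image": 0.0029,
    "Google search": 0.0003,
}


def per_hundred(kwh_per_unit: float) -> float:
    """Energy for 100 repetitions of an activity."""
    return kwh_per_unit * 100


for name, kwh in per_unit_kwh.items():
    total = per_hundred(kwh)
    share = total / household_daily_kwh * 100
    print(f"100 x {name}: {total:.2f} kWh ({share:.1f}% of a household day)")
```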

While individual text prompts might not be the most energy-intensive tasks, other GenAI tasks (such as image generation, video generation, and deep research) can demand more energy, and the scale of GenAI adoption will make it a major component of our collective emissions in the coming years. Data centres may account for 3-4% of global energy consumption by 2030, with AI making up about 19% of that share.
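Combining the two projections gives a sense of AI's possible share of global energy consumption by 2030. This is a back-of-the-envelope sketch with a hypothetical function name, and it simply multiplies the two percentages quoted above.

```python
def ai_share_of_global(data_centre_share: float, ai_share_of_data_centres: float = 0.19) -> float:
    """AI's share of global energy use, given the data centre share of the total."""
    return data_centre_share * ai_share_of_data_centres


low = ai_share_of_global(0.03)   # data centres at 3% of global consumption
high = ai_share_of_global(0.04)  # data centres at 4% of global consumption
print(f"AI share of global energy by 2030: {low:.2%} to {high:.2%}")
```

On these figures, AI alone would account for roughly 0.6% to 0.8% of global energy consumption.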

Important

Many communities actively protest the construction of data centres in their vicinity. For example, in Canada, Chief Sheldon Sunshine of the Sturgeon Lake Cree wrote an open letter to the Alberta Premier on January 13, 2025, expressing alarm over a proposed mega AI data centre project's potential environmental effects on the ecologically sensitive area, which holds deep importance for the community.

Data centres also use a lot of water to keep their equipment cool, potentially competing with other uses in their vicinity such as residential and farm use. A small 1-megawatt data centre can use nearly 26 million litres of water per year.

In terms of individual use, the generative AI model GPT-3, for example, is estimated to consume 500 mL of water for every 10 to 50 responses. For comparison, average daily water use in Canada in 2021 was 401 litres per person.
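To put these water figures on a common scale, the following Python sketch converts them to per-response and per-person terms. It uses only the numbers quoted above; the variable names are illustrative, and a 365-day year is assumed.

```python
ML_PER_BATCH = 500               # estimated water use per batch of responses (mL)
RESPONSES_PER_BATCH = (10, 50)   # a batch is somewhere between 10 and 50 responses
DAILY_WATER_L_PER_PERSON = 401   # average daily water use in Canada, 2021
DATA_CENTRE_L_PER_YEAR = 26e6    # small 1-megawatt data centre

# Water per response: fewer responses per batch means more water each.
ml_per_response_high = ML_PER_BATCH / RESPONSES_PER_BATCH[0]
ml_per_response_low = ML_PER_BATCH / RESPONSES_PER_BATCH[1]

# Responses needed to match one person's average daily water use.
responses_min = DAILY_WATER_L_PER_PERSON * 1000 / ml_per_response_high
responses_max = DAILY_WATER_L_PER_PERSON * 1000 / ml_per_response_low

# Number of people whose daily use matches the data centre's daily use.
people_equivalent = DATA_CENTRE_L_PER_YEAR / 365 / DAILY_WATER_L_PER_PERSON

print(f"Water per response: {ml_per_response_low:.0f} to {ml_per_response_high:.0f} mL")
print(f"Responses per person-day of water: {responses_min:.0f} to {responses_max:.0f}")
print(f"1 MW data centre is roughly {people_equivalent:.0f} people's daily water use")
```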

2. Production: Material extraction, manufacturing, and distribution

As with any multi-stage production chain, the environmental effects of AI begin long before deployment. Producing the computer hardware that AI systems need demands considerable amounts of natural resources and carries a significant environmental footprint. This challenge is not unique to AI; it is shared by all digital technology. For example, a 2 kg computer can require around 800 kg of raw materials. Components needed for AI and other computer technologies, such as microchips, depend heavily on small amounts of critical minerals like cobalt, tungsten, and lithium. Though digital devices contain only grams of these minerals each, global demand is rising sharply. For some minerals, such as cobalt and certain rare earth elements, supply could fall short of demand by 2030.

Important

In 2023, Canada produced over 5,000 tonnes of cobalt, a key material in microchips used for AI technology, with 43% sourced from Newfoundland and Labrador and the remainder from Ontario, Manitoba, and Quebec. Cobalt mining in Canada, a growing industry, presents significant environmental consequences, including habitat destruction, water pollution, and potential contamination with toxic metals. Specifically, historical mining in areas like the Cobalt Mining Camp in northern Ontario has resulted in unremediated mine sites and tailings deposits containing hazardous substances like arsenic, as well as economically valuable metals like cobalt.

3. End-of-life: Disposal and recycling

Rapid AI development drives constant hardware replacement or upgrades, contributing to growing e-waste. Discarded electronics are often improperly disposed of, leaching toxic substances like mercury and lead into the environment. These can contaminate soil and water, posing risks to ecosystems and human health.

Although valuable metals like gold, copper, and rare earth elements are found in computer hardware, only about 22% of e-waste is collected and recycled globally. Data security also complicates the process, as companies must ensure that sensitive information is fully erased before devices are recycled or repurposed. Recycling rates for many rare materials, particularly rare earth minerals, remain too low to meet projected demand.

Experts suggest extending hardware lifespans, refurbishing components, and designing equipment that is easier to recycle. Several of the objectives described in the Government of Canada’s Policy on Green Procurement align with those strategies. If widely implemented, these measures could reduce e-waste by up to 86%. Globally, however, the documented rate of e-waste recycling is rising only a fifth as quickly as e-waste production. A concerted effort from all sectors is needed to achieve meaningful change.

Important

In Canada, provincial regulations have been introduced to tackle the increasing issue of e-waste. These rules generally place the responsibility for managing end-of-life electronics on the producers (manufacturers and importers) through a Canada-wide Action Plan for Extended Producer Responsibility. As a result, collection and recycling programs have been set up nationwide, providing consumers with convenient options for responsible e-waste disposal. However, despite these efforts, only about 20% of e-waste is effectively recycled.

Global response and regulatory actions

Over 190 countries, including Canada, have agreed to non-binding recommendations on the ethical use of AI, which include addressing its environmental footprint. UNESCO has also introduced global AI standards that focus on sustainability, and the European Union has imposed sanctions and fines for certain environmental impacts.

In Canada, the Directive on Automated Decision-Making requires departments to assess and publish the environmental impact of AI systems using the Treasury Board's Algorithmic Impact Assessment Tool before deploying any automated system. The AI Strategy for the Federal Public Service 2025-2027, released in March 2025, also emphasizes environmental governance.

As highlighted in the article Dialing Down the Heat with Machine Learning, AI is also being used for research and development that supports sustainability and efficiency. The article details its use in climate change research, such as detecting risks near transport routes, tracking marine life, enabling precision farming, and monitoring water quality.

Conclusion

As AI adoption accelerates, its environmental effects are drawing increasing attention. While AI investment and adoption are widely seen as crucial for countries and organizations, balancing them with sustainability is a rapidly growing concern. The response could include developing more efficient models, matching different technologies to different needs, relying on market forces that favour lower-footprint solutions, or improving the efficiency of the energy inputs at their source.

Resources